Artificial intelligence is a big trend right now, especially machine learning. The amount of data available on the internet is growing every day, so it makes sense that we would want to analyze it and turn it into useful information. Any insight we can get from it helps us discover new ways to enrich the user experience in our apps.

What is machine learning?

Machine learning is a field of computer science that allows computers to learn without being explicitly programmed. Many of the techniques used to accomplish this mimic natural processes: neural networks are modeled on the human brain, and genetic algorithms on natural selection.

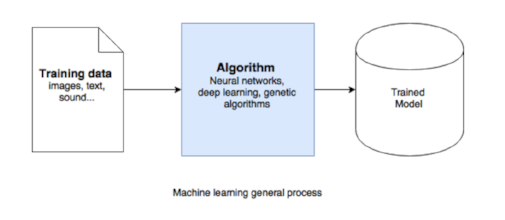

The general process, regardless of the algorithm chosen, consists of taking a set of raw data or “training data” and feeding it to the algorithm so that it can learn and then produce a trained model. This trained model will later serve as a tool to make inferences about new input data.
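To make the train-then-infer flow concrete, here is a minimal sketch in plain Swift. It is a toy nearest-centroid classifier, not how Core ML or MobileNet work internally, and the names `CentroidModel` and `train(on:)` are made up for this illustration.

```swift
// A toy "trained model": one centroid (mean value) per label.
struct CentroidModel {
    let centroids: [String: Double]

    // Inference: return the label whose centroid is closest to the new input.
    func predict(_ value: Double) -> String? {
        return centroids.min { abs($0.value - value) < abs($1.value - value) }?.key
    }
}

// Training: average the samples for each label to produce the trained model.
func train(on samples: [(value: Double, label: String)]) -> CentroidModel {
    var sums: [String: (total: Double, count: Int)] = [:]
    for sample in samples {
        let current = sums[sample.label] ?? (total: 0, count: 0)
        sums[sample.label] = (total: current.total + sample.value,
                              count: current.count + 1)
    }
    return CentroidModel(centroids: sums.mapValues { $0.total / Double($0.count) })
}

// Hypothetical training data: a number paired with the label we want to learn.
let trainingData: [(value: Double, label: String)] = [
    (1.0, "small"), (2.0, "small"), (9.0, "large"), (11.0, "large")
]
let toyModel = train(on: trainingData)
let guess = toyModel.predict(8.5)   // "large": 8.5 is closer to 10.0 than to 1.5
```

Real models learn far richer representations, but the shape is the same: training data goes in once, and the resulting model answers questions about inputs it has never seen.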

The training data could be anything from images to sound or text. Phones capture all of these, which makes machine learning a natural fit for mobile. At WWDC 2017, Apple introduced Core ML, a framework that makes it very easy to incorporate trained models into our apps.

How to use Core ML?

The first step to using this tool is to download one of the trained models available from Apple. If you have a custom model, you can also convert it to the Core ML format with Core ML Tools or Apache MXNet.

For the purpose of this example let’s download MobileNet, an open-source model for efficient on-device vision. According to Apple’s description, it detects the dominant objects present in an image from a set of 1,000 categories such as trees, animals, food, vehicles, people, and more.

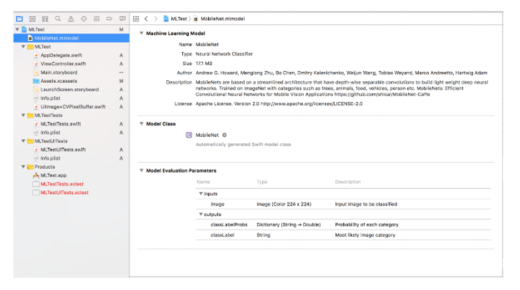

After downloading the model, all you have to do is drag it into your Xcode 9 project, anywhere under the project folder, and Xcode will recognize it. Make sure that the model is part of the main target so that Xcode also creates a Swift class for it. Once it is loaded, you can see the characteristics of the model as well as the inputs it takes and the outputs it produces.

Once the model is ready, all you have to do to get predictions is instantiate it and pass in the input data. MobileNet takes an image as input and produces two outputs: 'classLabel', a String containing the most likely image category, and 'classLabelProbs', a dictionary containing every possible category along with its probability.
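As a rough sketch, the call could look like the following. It assumes that Xcode generated a class named `MobileNet` from the model file and that the image input is named `image`; check the names Xcode shows for your model. The function name `describe` is made up for this example, and converting a photo into a `CVPixelBuffer` of the size the model expects is left out.

```swift
import CoreML
import CoreVideo

// Assumption: `MobileNet` is the class Xcode generated from MobileNet.mlmodel,
// with an input named `image` and the outputs `classLabel` and `classLabelProbs`.
func describe(_ pixelBuffer: CVPixelBuffer) -> String? {
    let model = MobileNet()

    // prediction(image:) throws if the input cannot be processed.
    guard let output = try? model.prediction(image: pixelBuffer) else {
        return nil
    }

    // Look up the probability of the winning category in the dictionary.
    let probability = output.classLabelProbs[output.classLabel] ?? 0
    return "\(output.classLabel) (\(probability))"
}
```

This only compiles inside a project that contains the model, because the `MobileNet` class does not exist until Xcode generates it.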

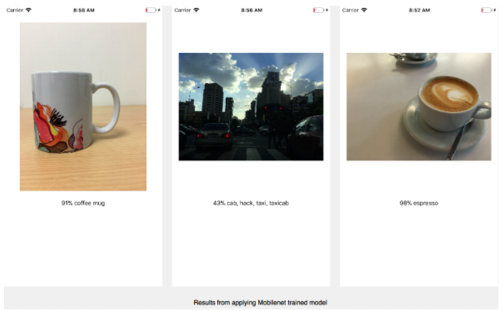

Let’s run a test with different pictures to see the results.

Results

As the results show, this model does extremely well with clear, prominent subjects but struggles a bit with busy photos. Still, these results are impressive for out-of-the-box functionality.
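For busy photos it can help to look beyond the single best guess and inspect the top few entries of 'classLabelProbs'. The helper below is a small sketch of that idea in plain Swift. The function name `topCategories` and the sample probabilities are invented for illustration and are not output from the model.

```swift
// Returns the `count` most probable categories, highest probability first.
func topCategories(from probabilities: [String: Double],
                   count: Int) -> [(label: String, probability: Double)] {
    let ranked = probabilities.sorted { $0.value > $1.value }
    return ranked.prefix(max(0, count)).map { (label: $0.key, probability: $0.value) }
}

// Made-up values standing in for a real `classLabelProbs` dictionary.
let sampleProbs = [
    "golden retriever": 0.62,
    "tennis ball": 0.21,
    "park bench": 0.09,
    "umbrella": 0.08
]

for candidate in topCategories(from: sampleProbs, count: 3) {
    print("\(candidate.label): \(candidate.probability)")
}
```

When the first two or three probabilities are close together, that is usually a sign that the photo contains several competing subjects.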

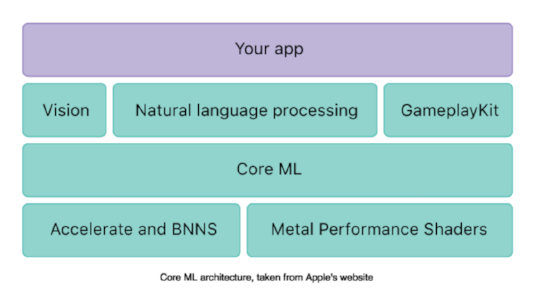

The applications for machine learning are endless. They include things like sentiment analysis, language detection and voice recognition. Alongside Core ML, Apple also released Vision, a framework that allows you to apply computer vision techniques on the device. This, together with the existing NSLinguisticTagger for natural language processing, makes it much easier to do things like recognize the dominant language in a text, identify names or places within a string, and even classify nouns, verbs, adjectives and other parts of speech.
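Here is a short sketch of the two NSLinguisticTagger tasks mentioned above: detecting the dominant language and picking names and places out of a string. The wrapper functions `dominantLanguage(of:)` and `namedEntities(in:)` and the sample sentence are made up for this example. It requires iOS 11 or macOS 10.13 or later.

```swift
import Foundation

// Returns a language code such as "en", or nil if no language can be determined.
func dominantLanguage(of text: String) -> String? {
    return NSLinguisticTagger.dominantLanguage(for: text)
}

// Collects the people, places and organizations the tagger finds in the text.
func namedEntities(in text: String) -> [(name: String, kind: String)] {
    let tagger = NSLinguisticTagger(tagSchemes: [.nameType], options: 0)
    tagger.string = text

    let range = NSRange(location: 0, length: text.utf16.count)
    let options: NSLinguisticTagger.Options = [.omitPunctuation, .omitWhitespace, .joinNames]
    let wanted: [NSLinguisticTag] = [.personalName, .placeName, .organizationName]

    var found: [(name: String, kind: String)] = []
    tagger.enumerateTags(in: range, unit: .word, scheme: .nameType, options: options) { tag, tokenRange, _ in
        if let tag = tag, wanted.contains(tag) {
            let name = (text as NSString).substring(with: tokenRange)
            found.append((name: name, kind: tag.rawValue))
        }
    }
    return found
}

let sentence = "Apple introduced Core ML at WWDC in San Jose."
print(dominantLanguage(of: sentence) ?? "unknown")
for entity in namedEntities(in: sentence) {
    print("\(entity.name): \(entity.kind)")
}
```

Switching the scheme from `.nameType` to `.lexicalClass` makes the same enumeration report parts of speech such as nouns, verbs and adjectives.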

These new APIs are built on Metal Performance Shaders and Accelerate, so they run directly on the device, which addresses privacy, data consumption and availability concerns.

Takeaway

All of these APIs make incorporating machine learning into your app straightforward. It can only benefit us to harness the power of this data and enhance the user experience in our apps.